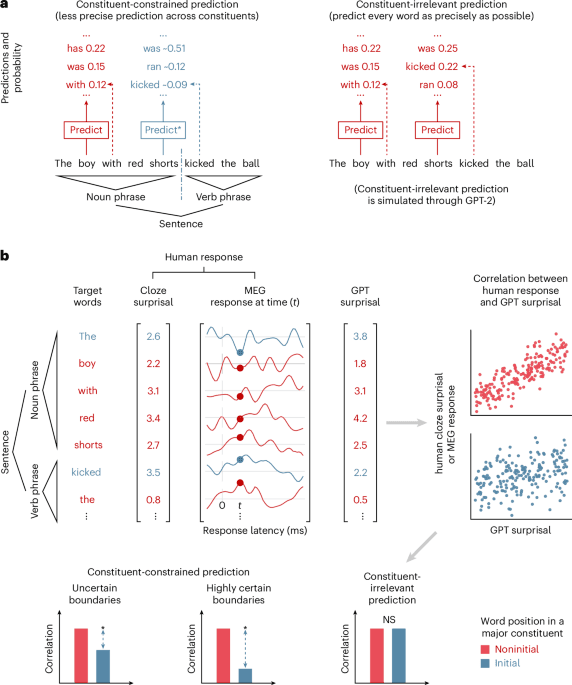

Constituent-constrained word prediction during language comprehension

Original Article Summary

Zou et al. reveal a key difference between human brains and large language models (LLMs). While LLMs are optimized to predict the next word, the human brain modulates prediction efficiency by strategically grouping words into phrases.

Read full article at Nature.com

Our Analysis

Zou et al. study constituent-constrained word prediction during language comprehension and report a key difference between human brains and large language models (LLMs): whereas LLMs are optimized to predict the next word, the human brain modulates prediction efficiency by strategically grouping words into phrases. For website owners, this highlights a limit on how closely LLMs mirror human language processing. LLM-based tools may, for instance, struggle to anticipate user search queries or to generate content that readers find relevant, which could in turn reduce user engagement and increase bounce rates. The research also suggests that website owners may need to reassess how heavily they rely on LLMs for content optimization and user experience personalization.

Website owners can take three steps in response. First, review current LLM-powered content generation tools to make sure they do not over-rely on next-word prediction models. Second, explore alternative natural language processing (NLP) techniques that better account for the nuances of human language processing. Third, prioritize human editorial oversight so that website content is optimized for user experience and search engine optimization (SEO), potentially alongside an llms.txt file (a proposed convention for giving LLMs a curated overview of a site) and monitoring of AI bot traffic.
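The final step above mentions AI bot traffic. As a rough illustration only, the sketch below counts requests from a few AI crawlers in web server access logs. It assumes logs in the common "combined" format, where the user agent is the last double-quoted field. The token list (`GPTBot`, `ClaudeBot`, `PerplexityBot`, `CCBot`) and the function name `count_ai_bot_hits` are assumptions made for this example, not part of the article; check each vendor's current crawler documentation before relying on them.

```python
import re
from collections import Counter

# Assumed user-agent substrings for a few AI crawlers (illustrative, not exhaustive).
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "CCBot")

# In the combined log format, the user agent is the last double-quoted field.
_USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_ai_bot_hits(log_lines):
    """Return a Counter mapping each matched crawler token to its request count."""
    counts = Counter()
    for line in log_lines:
        match = _USER_AGENT_RE.search(line)
        if match is None:
            continue  # skip lines that do not end with a quoted field
        user_agent = match.group(1).lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break  # attribute each request to at most one crawler
    return counts
```

Passing an open log file to `count_ai_bot_hits` works because the function accepts any iterable of lines. User-agent strings can be spoofed, so the counts are an estimate rather than a verified measurement.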
