State media control influences large language models

Original Article Summary

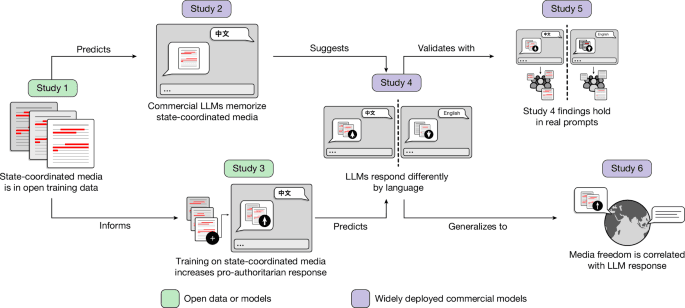

Government-controlled media influences the output of large language models via their training data, and models queried in the languages of countries with lower media freedom show a stronger pro-regime valence than models queried in the languages of …

Read full article at Nature.com

Our Analysis

The Nature study reports that government-controlled media shapes the output of large language models through their training data. Models queried in the languages of countries with lower media freedom show a stronger pro-regime valence than models queried in the languages of countries with higher media freedom.

For website owners who rely on large language models to generate content, this means published text may inadvertently carry biased or pro-regime viewpoints, particularly when the target audience speaks a language associated with a country that has limited media freedom. That can undermine a site's credibility and trustworthiness, especially when the slant conflicts with the owner's values or the audience's expectations.

To mitigate this risk, website owners can take several steps: (1) monitor their AI-generated content for bias and pro-regime valence, (2) consider alternative language models trained on more diverse and less biased datasets, and (3) regularly review and update their llms.txt files so that the guidance they give AI crawlers stays current.
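As a rough illustration of the monitoring step, the sketch below scores drafts against two small term lists and flags the ones that lean strongly favorable. It is not the method used in the study: the term lists, the `valence_score` and `flag_for_review` names, and the 0.5 threshold are all hypothetical placeholders, and the lists are English-only, so a real check would need reviewer-maintained lists per language.

```python
import re

# Hypothetical, reviewer-maintained term lists (assumptions, not from the study).
FAVORABLE_TERMS = {"stability", "prosperity", "harmony"}
CRITICAL_TERMS = {"censorship", "crackdown", "repression", "protest"}


def count_terms(text: str, terms: set[str]) -> int:
    """Count whole-word, case-insensitive occurrences of any term in the text."""
    lowered = text.lower()
    return sum(
        len(re.findall(r"\b" + re.escape(term) + r"\b", lowered))
        for term in terms
    )


def valence_score(text: str) -> float:
    """Return a score in [-1, 1]; positive means favorable terms dominate."""
    favorable = count_terms(text, FAVORABLE_TERMS)
    critical = count_terms(text, CRITICAL_TERMS)
    total = favorable + critical
    if total == 0:
        return 0.0  # no listed terms found, so no signal either way
    return (favorable - critical) / total


def flag_for_review(drafts: dict[str, str], threshold: float = 0.5) -> list[str]:
    """Return the names of drafts whose score exceeds the (assumed) threshold."""
    return [name for name, text in drafts.items() if valence_score(text) > threshold]
```

A keyword count like this only surfaces candidates for human review; it cannot judge context or tone, so flagged drafts still need an editor's read.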

