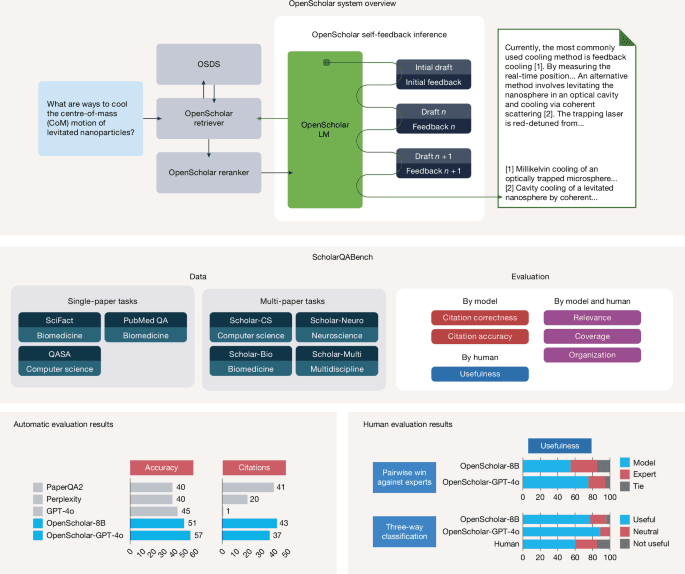

Synthesizing scientific literature with retrieval-augmented language models

Original Article Summary

A specialized, open-source, retrieval-augmented language model is introduced for answering scientific queries and synthesizing literature, the responses of which are shown to be preferred by human evaluations over expert-written answers.

Read full article at Nature.com

Our Analysis

The introduction of a specialized, open-source, retrieval-augmented language model for synthesizing scientific literature marks a notable advance in AI's ability to process and generate expert-level content. This development matters for website owners, particularly those in the academic, research, or scientific communities, because it may lead to more AI crawler traffic on their sites. Retrieval-augmented models depend on access to source documents, so AI bots are likely to crawl and index scientific websites more often, which could skew website analytics and content engagement metrics. To prepare for this shift, website owners can take three steps. First, review and update their llms.txt files, alongside their robots.txt rules for AI crawlers, so that the site's content policies reflect the expected increase in AI bot traffic. Second, consider implementing AI-detection tools to differentiate between human and bot traffic, allowing for more accurate analytics and engagement tracking. Third, monitor the site's content for potential duplication or synthesis by AI models, and develop strategies to maintain the authenticity and value of the original content.
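As a rough illustration of separating human from bot traffic, the sketch below tallies requests by matching the User-Agent header against a short list of AI crawler tokens. The token list, the function names, and the sample strings are assumptions made for this example, not an authoritative registry. User-Agent values can also be spoofed, so treat the result as a first-pass estimate only.

```python
from collections import Counter

# Illustrative, non-exhaustive tokens that AI crawlers commonly include in
# their User-Agent headers. Assumption: starter data only; confirm the
# current strings against each vendor's own crawler documentation.
AI_BOT_TOKENS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot", "CCBot")


def classify_user_agent(user_agent: str) -> str:
    """Return the first matching AI bot token, or 'other' if none matches."""
    lowered = user_agent.lower()
    for token in AI_BOT_TOKENS:
        # Case-insensitive substring match against the raw header value.
        if token.lower() in lowered:
            return token
    return "other"


def count_traffic(user_agents) -> Counter:
    """Tally requests per category for an iterable of User-Agent strings."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


if __name__ == "__main__":
    # Hypothetical User-Agent values, as they might appear in an access log.
    sample = [
        "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/120.0",
        "Mozilla/5.0 (compatible; ClaudeBot/1.0)",
    ]
    for category, hits in count_traffic(sample).items():
        print(f"{category}: {hits}")
```

In practice the User-Agent strings would come from the web server's access logs, and the "other" bucket still mixes human visitors with bots that are not on the list.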
