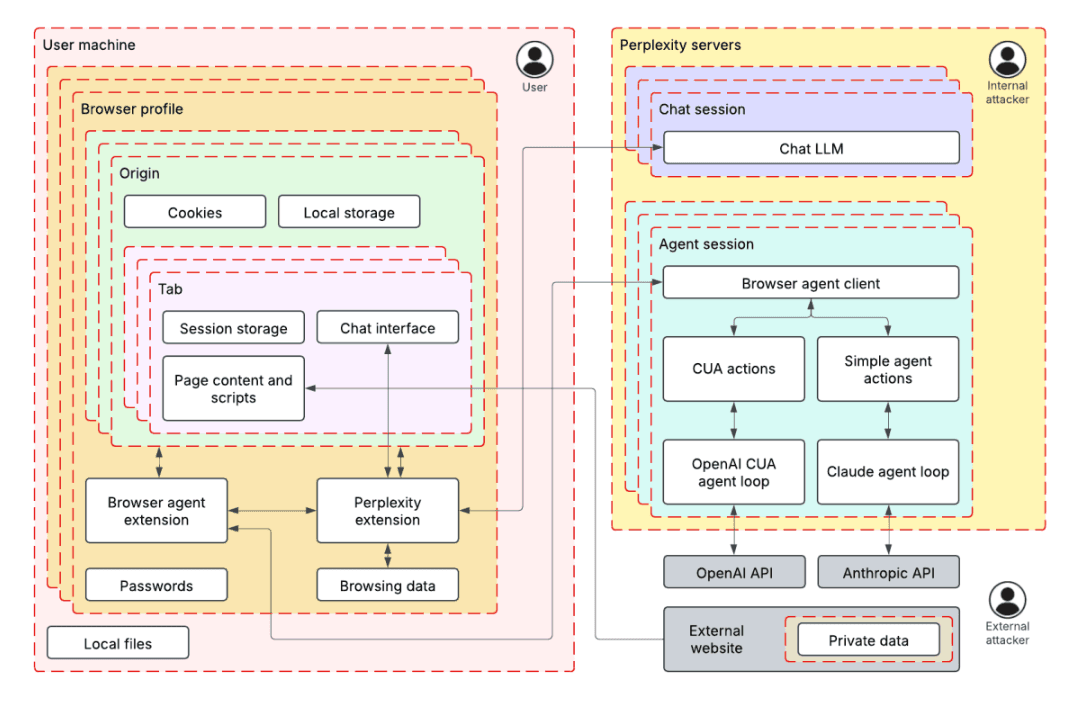

Using threat modeling and prompt injection to audit Comet

Original Article Summary

Before launching their Comet browser, Perplexity hired us to test the security of their AI-powered browsing features. Using adversarial testing guided by our TRAIL threat model, we demonstrated how four prompt injection techniques could extract users’ private…

Read full article at Trailofbits.com

Our Analysis

Perplexity hired a security firm to test Comet's AI-powered browsing features before launch, a proactive way to find vulnerabilities before users are exposed to them. According to the summary, the testers demonstrated four prompt injection techniques that could extract users' private information, which underscores how much AI-powered browsing features need robust defenses.

For website owners, the finding is a reminder that AI-powered features carry risks of their own. A site that integrates an AI chatbot or a similar interactive feature may inadvertently expose its users' private information if that feature can be manipulated through prompt injection, so these features need regular monitoring and auditing.

To mitigate these risks, website owners can take several steps. First, regularly review and update AI-powered features so that they follow current security practices. Second, implement logging and monitoring that can surface suspicious activity. Third, track AI bot traffic on the site, for example through server access logs, and restrict crawlers that are not wanted. Note that llms.txt is a proposed convention for publishing LLM-readable site content; on its own it does not track or block bot traffic, so it complements these measures rather than replacing them.
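As a rough illustration of the logging step, the sketch below counts requests from self-identified AI crawlers in web server access logs. It assumes logs in the common "combined" format, where the user agent is the last quoted field, and the token list (`AI_BOT_TOKENS`) is an illustrative, non-exhaustive assumption rather than an authoritative registry. The function names are hypothetical. User agents can be spoofed, so this gives visibility into declared bot traffic only; it does not detect prompt injection.

```python
import re
from collections import Counter

# Assumption: illustrative, non-exhaustive substrings that some AI crawlers
# include in their User-Agent header. Adjust to the bots you care about.
AI_BOT_TOKENS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot", "CCBot")

# Assumption: "combined" log format, where the user agent is the final
# double-quoted field on each line.
_USER_AGENT_PATTERN = re.compile(r'"([^"]*)"\s*$')


def extract_user_agent(log_line):
    """Return the last quoted field of a log line, or None if there is none."""
    match = _USER_AGENT_PATTERN.search(log_line)
    return match.group(1) if match else None


def count_ai_bot_hits(log_lines):
    """Count requests per AI bot token, matching case-insensitively."""
    counts = Counter()
    for line in log_lines:
        user_agent = extract_user_agent(line)
        if user_agent is None:
            continue
        lowered = user_agent.lower()
        for token in AI_BOT_TOKENS:
            if token.lower() in lowered:
                counts[token] += 1
                break  # attribute each request to at most one token
    return counts


if __name__ == "__main__":
    # Hypothetical sample lines; the IP addresses are from documentation ranges.
    sample = [
        '203.0.113.5 - - [01/Jan/2025:00:00:01 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
        '198.51.100.7 - - [01/Jan/2025:00:00:02 +0000] "GET /about HTTP/1.1" 200 1024 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    ]
    for token, hits in count_ai_bot_hits(sample).items():
        print(token, hits)
```

Counts like these could feed a dashboard or an alert threshold. Blocking decisions would still need a separate mechanism, such as server rules, because log analysis only observes traffic after the fact.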
